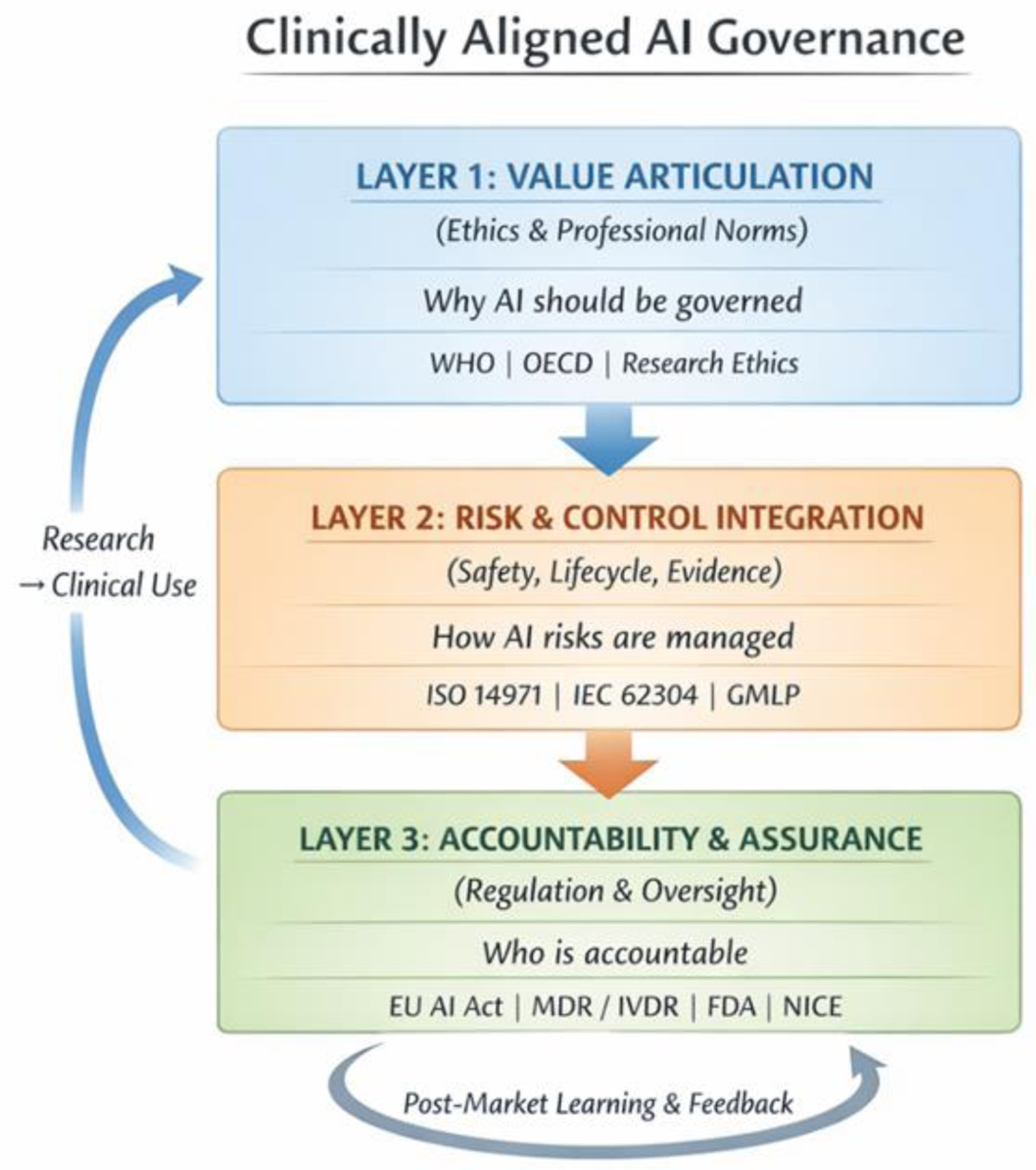

Figure 1. Clinically aligned AI governance: a three-layer governance architecture. The three layers are aligned with the organizational Three Lines model: Layer 1 (value articulation and clinical purpose; owned by executive and clinical leadership; first line of defense), Layer 2 (risk and control integration; anchored in patient safety, quality, and research governance functions; second line), and Layer 3 (accountability and assurance; led by internal audit, regulators, and independent reviewers; third line). Bidirectional arrows indicate the feedback and escalation pathways that connect all three layers. Each layer links directly to an existing clinical governance structure, embedding AI oversight within, rather than parallel to, established systems of clinical care and accountability.