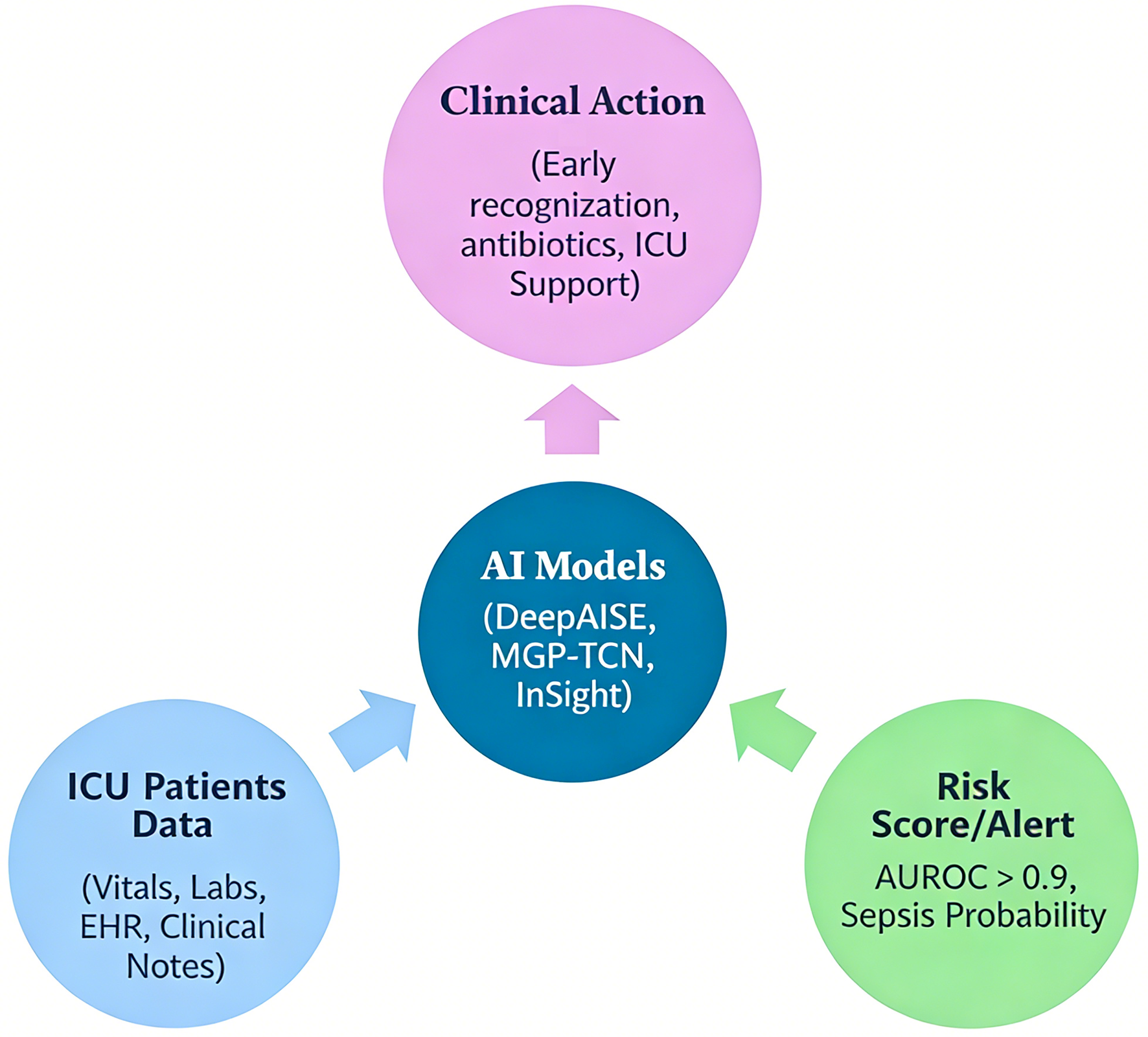

Figure 1. Workflow of artificial intelligence (AI)-based sepsis prediction in the intensive care unit (ICU). Patient data, including vital signs, laboratory values, and electronic health record (EHR) inputs, are continuously collected and pre-processed (data cleaning, normalization, and handling of missing values). The processed data are fed into machine learning or deep learning models (e.g., random forests, recurrent neural networks, or temporal convolutional networks), which generate dynamic risk scores in real time. These scores are integrated into clinical workflows through EHR-based alert systems that prompt early clinical evaluation and intervention (e.g., antibiotic initiation, fluid resuscitation). The figure also highlights feedback loops for model refinement and the role of clinician oversight in ensuring safe and effective implementation.
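
The stages in Figure 1 can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a validated clinical model: the feature names, reference statistics, weights, bias, and alert threshold are all hypothetical, and a simple logistic score stands in for the trained models named in the caption. Forward-filling is only one of several ways to handle missing values, and the feedback loop and clinician oversight steps are left out.

```python
import math

# Hypothetical reference statistics (mean, std) per feature.
# Illustrative numbers only, not clinical reference ranges.
REFERENCE = {
    "heart_rate": (85.0, 15.0),
    "resp_rate": (18.0, 5.0),
    "lactate": (1.5, 1.0),
}
# Hypothetical weights standing in for a trained model
# (e.g., a random forest or recurrent neural network).
WEIGHTS = {"heart_rate": 0.8, "resp_rate": 0.6, "lactate": 1.2}
BIAS = -2.0
ALERT_THRESHOLD = 0.5  # assumed; in practice tuned during validation


def forward_fill(series):
    """Replace None with the most recent observed value; leading gaps stay None."""
    filled, last = [], None
    for value in series:
        if value is not None:
            last = value
        filled.append(last)
    return filled


def normalize(name, value):
    """Z-score against the reference stats; a still-missing value maps to 0.0."""
    if value is None:
        return 0.0
    mean, std = REFERENCE[name]
    return (value - mean) / std


def risk_score(features):
    """Logistic risk score in (0, 1) computed from normalized features."""
    z = BIAS + sum(WEIGHTS[name] * normalize(name, v) for name, v in features.items())
    return 1.0 / (1.0 + math.exp(-z))


def score_patient(record):
    """Return one (risk, alert) pair per time step for equal-length feature series."""
    filled = {name: forward_fill(series) for name, series in record.items()}
    steps = len(next(iter(filled.values())))
    results = []
    for t in range(steps):
        risk = risk_score({name: series[t] for name, series in filled.items()})
        results.append((risk, risk >= ALERT_THRESHOLD))
    return results


if __name__ == "__main__":
    # Synthetic hourly record with gaps (None marks a missing measurement).
    record = {
        "heart_rate": [88, None, 112, 125],
        "resp_rate": [18, 20, None, 28],
        "lactate": [None, 1.4, 2.6, 4.1],
    }
    for hour, (risk, alert) in enumerate(score_patient(record)):
        print(hour, round(risk, 2), "ALERT" if alert else "ok")
```

In a deployed system the alert flag would be passed to the EHR alerting layer for clinician review instead of being printed, and the hand-set weights would be replaced by a model fitted and validated on local ICU data.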