Clinically Aligned AI Governance: Integrating Ethics, Risk, and Regulation in Healthcare

DOI: https://doi.org/10.14740/aicm20

Keywords: Artificial intelligence, Clinical governance, Healthcare regulation, Patient safety, AI lifecycle, Accountability, Risk management

Abstract

Background: Artificial intelligence (AI) systems increasingly influence clinical decision-making, yet current governance approaches often treat AI as a technical compliance issue rather than an integral component of clinical care, creating critical gaps in patient safety, accountability, and trust. This paper examines how AI can be governed effectively in healthcare and addresses two questions: what distinguishes AI governance in healthcare from AI governance in other sectors, and how AI governance can be integrated into existing clinical systems to manage risks across the full AI lifecycle.

Methods: Global normative frameworks (WHO, UN), cross-sector governance standards (OECD, NIST), and healthcare-specific regulations (EU AI Act, FDA guidance) were systematically analyzed, and empirical evidence and case studies from deployed clinical AI systems were reviewed and synthesized. From this analysis, the paper develops a layered governance architecture for healthcare organizations.

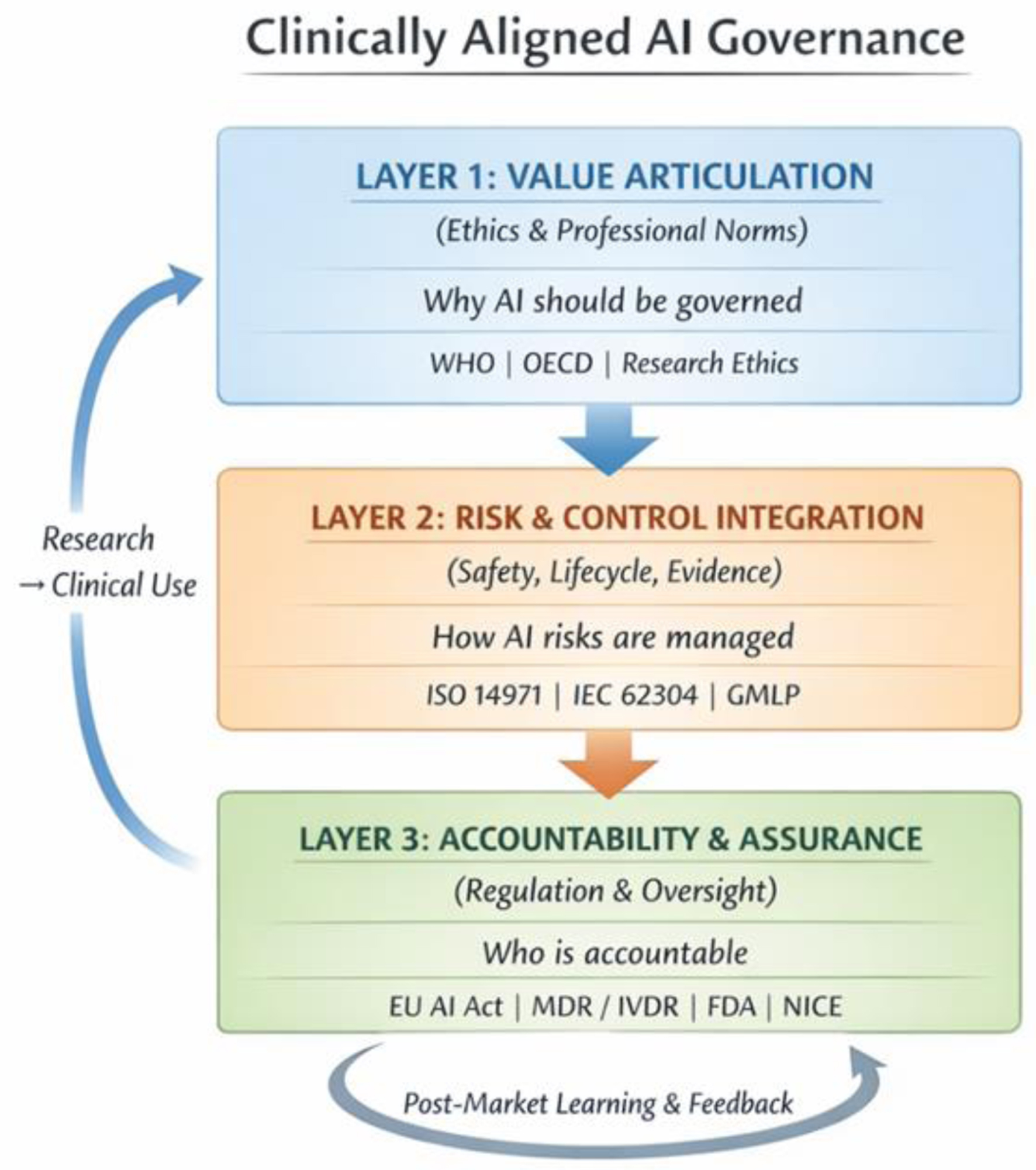

Results: Governance failures in healthcare AI arise less from ethical or regulatory gaps than from weak institutional translation of existing principles and rules into effective risk management and oversight practices. The paper identifies a three-layer, clinically aligned governance architecture for healthcare organizations, structured around the three lines of the organizational oversight model. Layer 1, value articulation and clinical purpose, is owned by executive and clinical leadership; it establishes why AI should be used and under what conditions, bridging ethical intent to existing clinical policy and procurement structures. Layer 2, risk and control integration, is anchored in patient safety, quality, and research governance systems; it translates clinical intent into operational safeguards across the AI lifecycle. Layer 3, accountability and assurance, is led by internal audit and regulatory oversight functions; it sustains institutional trust and continuous learning over time. Each layer bridges directly to an existing clinical governance structure, so that AI oversight is embedded within, rather than parallel to, established systems. Healthcare organizations adopting this lifecycle-oriented, clinically integrated approach are better positioned to ensure safe, explainable clinical decision-making while preserving professional judgment and patient trust.
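One way to read the architecture described above is as a mapping from each layer to the functions that own it and to the existing clinical structures it plugs into. The Python sketch below encodes that mapping as plain data and flags any layer with no bridge to an existing structure, i.e. oversight running parallel to clinical governance rather than within it. The class `GovernanceLayer`, the function `find_parallel_layers`, the assumption that Layer n corresponds to line n of the three lines model, and the bridge labels for Layers 2 and 3 are illustrative assumptions, not part of the paper.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class GovernanceLayer:
    number: int                  # assumption: Layer n maps to line n of the three lines model
    name: str
    owners: Tuple[str, ...]      # functions the abstract names as owning, anchoring, or leading the layer
    bridges_to: Tuple[str, ...]  # existing clinical structures the layer is embedded in (labels partly hypothetical)


LAYERS = (
    GovernanceLayer(1, "Value articulation and clinical purpose",
                    ("executive leadership", "clinical leadership"),
                    ("clinical policy", "procurement")),
    GovernanceLayer(2, "Risk and control integration",
                    ("patient safety", "quality", "research governance"),
                    ("patient safety systems", "quality systems", "research governance systems")),
    GovernanceLayer(3, "Accountability and assurance",
                    ("internal audit", "regulatory oversight"),
                    ("internal audit function", "regulatory oversight function")),  # hypothetical labels
)


def find_parallel_layers(layers) -> List[str]:
    """Return the names of layers with no bridge to an existing clinical
    governance structure, i.e. oversight that would run parallel to it."""
    return [layer.name for layer in layers if not layer.bridges_to]


if __name__ == "__main__":
    # A hypothetical standalone ethics board with no bridge gets flagged.
    standalone = GovernanceLayer(4, "Standalone AI ethics board", ("ethics board",), ())
    print(find_parallel_layers(LAYERS))                  # []
    print(find_parallel_layers(LAYERS + (standalone,)))  # ['Standalone AI ethics board']
```

The check is deliberately trivial: it only expresses the abstract's requirement that every layer bridge to an existing structure, and says nothing about how well that structure actually manages AI risk.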

Conclusions: This paper introduces clinically aligned AI governance, a layered architecture that embeds AI oversight within existing clinical, research, and patient safety systems, as foundational institutional infrastructure for healthcare. The architecture bridges the gap between generic AI governance frameworks and healthcare-specific needs, addressing the persistent translation failures that undermine safe and accountable AI use in clinical settings. These findings have practical implications for healthcare leaders, regulators, and policymakers implementing clinical AI systems.

License

Copyright (c) 2026 The authors

This work is licensed under a Creative Commons Attribution 4.0 International License.